I am a third-year PhD student in computer science at UT Austin, advised by Prof. Purnamrita Sarkar and Prof. Kevin Tian. Broadly, my research interests lie at the intersection of statistics and machine learning. I am interested in designing machine learning algorithms with provable guarantees of convergence and correctness. I am also interested in quantifying the computational and statistical aspects of state-of-the-art deep learning methods. I deeply enjoy applying high-dimensional statistics, optimization, and probability theory to real-world problems. Previously, I was an undergraduate student in the Department of Computer Science and Engineering at IIT Bombay, where I worked with Prof. Suyash Awate on medical imaging and with Prof. Preethi Jyothi on NLP for code-switching. I also worked with Prof. Thomas Deserno on medical informatics and imaging in 2018.

Email / CV / GitHub / Google Scholar / LinkedIn

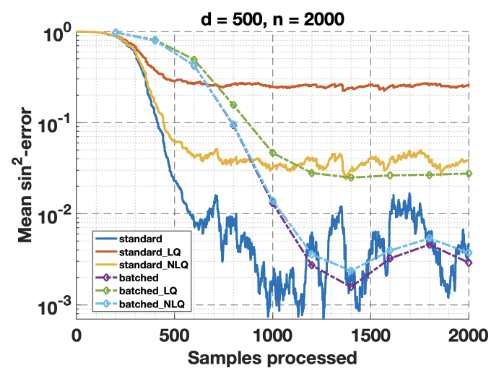

Syamantak Kumar, Shourya Pandey, Purnamrita Sarkar NeurIPS, 2025 Paper link We study Oja's algorithm for streaming PCA under linear and nonlinear stochastic quantization and show that a batched version achieves the lower bound up to logarithmic factors under both schemes.
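For readers unfamiliar with Oja's algorithm, here is a minimal Python/NumPy sketch of the basic streaming update (w becomes w + eta * x x^T w, then is renormalized), with an optional unbiased stochastic-rounding step standing in for quantization of the iterate. The uniform grid of width `delta`, the step size, the synthetic data, and the names `oja` and `stochastic_round` are illustrative assumptions; the batched algorithm and the nonlinear quantizer analyzed in the paper are not reproduced here.

```python
import numpy as np

def stochastic_round(v, delta, rng):
    """Round each entry of v to the grid delta * Z, up or down at random so that E[q(v)] = v."""
    scaled = v / delta
    lower = np.floor(scaled)
    # Round up with probability equal to the fractional part (unbiased rounding).
    round_up = rng.random(v.shape) < (scaled - lower)
    return delta * (lower + round_up)

def oja(samples, eta, delta=None, seed=0):
    """Oja's rule for the top eigenvector; optionally quantize the iterate after each update."""
    rng = np.random.default_rng(seed)
    w = rng.standard_normal(samples.shape[1])
    w /= np.linalg.norm(w)
    for x in samples:
        w = w + eta * x * (x @ w)  # Oja's update: w <- w + eta * x x^T w
        if delta is not None:
            w = stochastic_round(w, delta, rng)  # quantize the updated iterate
        w /= np.linalg.norm(w)  # project back to the unit sphere
    return w

# Synthetic stream with covariance diag(5, 1, 1, 1, 1); the top eigenvector is e_1.
data_rng = np.random.default_rng(1)
X = data_rng.standard_normal((5000, 5)) * np.sqrt([5.0, 1.0, 1.0, 1.0, 1.0])
w = oja(X, eta=0.005, delta=0.01)
print(abs(w[0]))  # alignment with e_1
```

Because the rounding is unbiased, each quantized update equals the exact update in expectation, which is why a small grid width only adds a modest amount of noise to the estimate in this toy setting.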

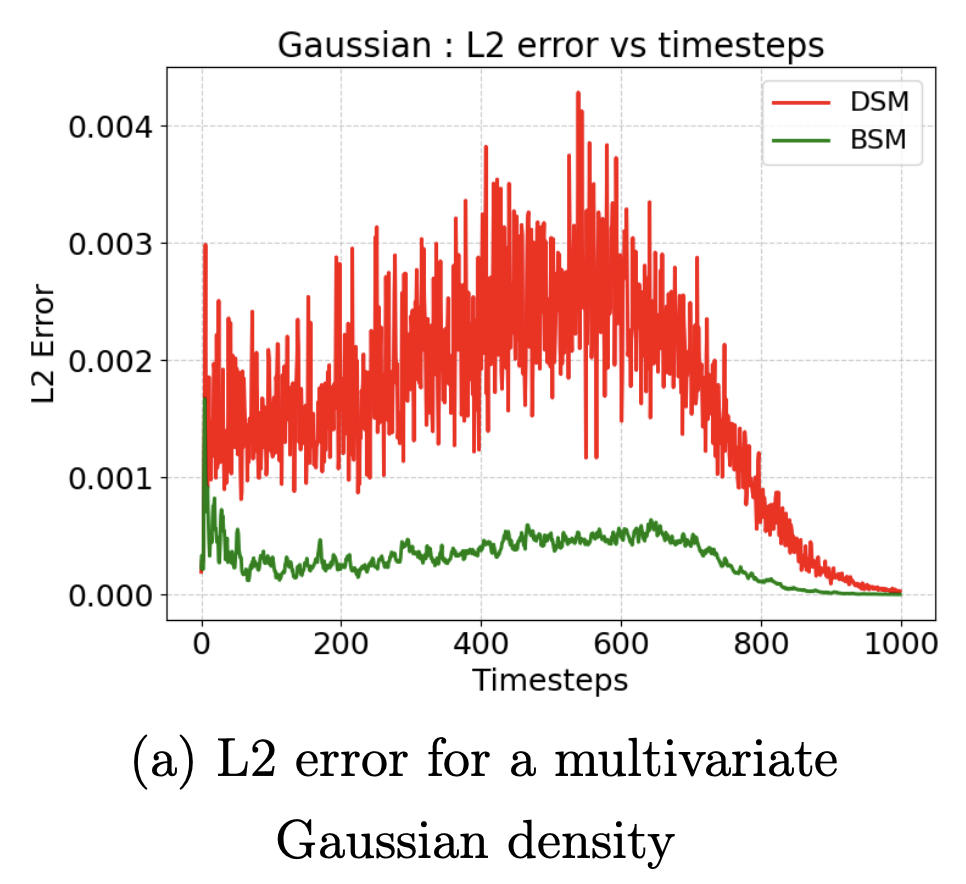

Syamantak Kumar, Dheeraj Nagaraj, Purnamrita Sarkar NeurIPS, 2025 Paper link We establish the first (nearly) dimension-free sample complexity bounds for learning the score functions used in diffusion models, achieving a double exponential improvement in dimension over prior results. Building on these insights, we propose Bootstrapped Score Matching (BSM), a variance reduction technique that uses previously learned scores to improve accuracy at higher noise levels.

Syamantak Kumar, Daogao Liu, Kevin Tian, Chutong Yang NeurIPS, 2025 Paper link We give a near-linear-time differentially private algorithm for computing the geometric median.

Syamantak Kumar, Purnamrita Sarkar, Kevin Tian, Yusong Zhu COLT, 2025 Paper link We give the first provable algorithms for spike-and-slab posterior sampling that apply for any SNR and use a measurement count sublinear in the problem dimension.

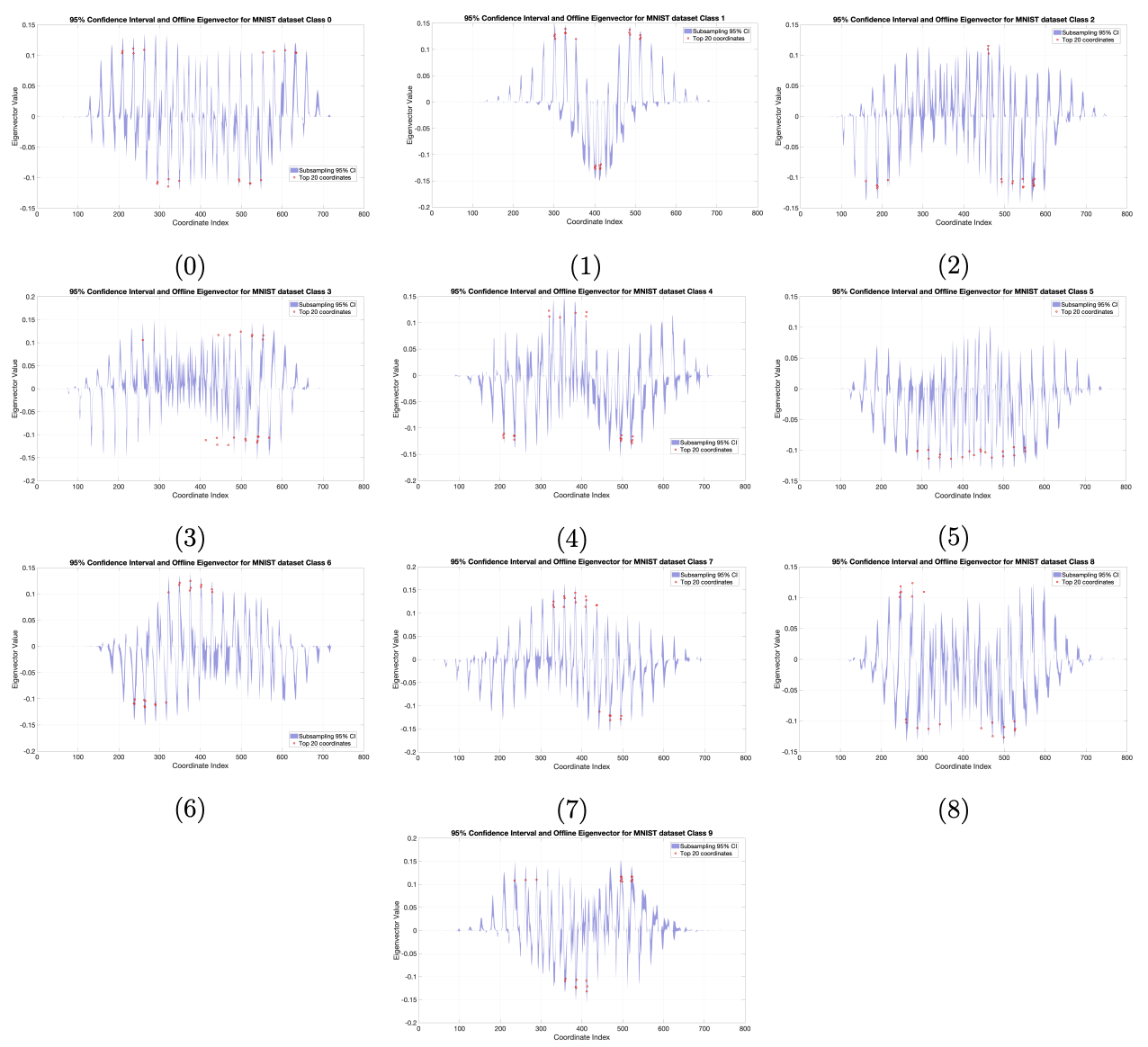

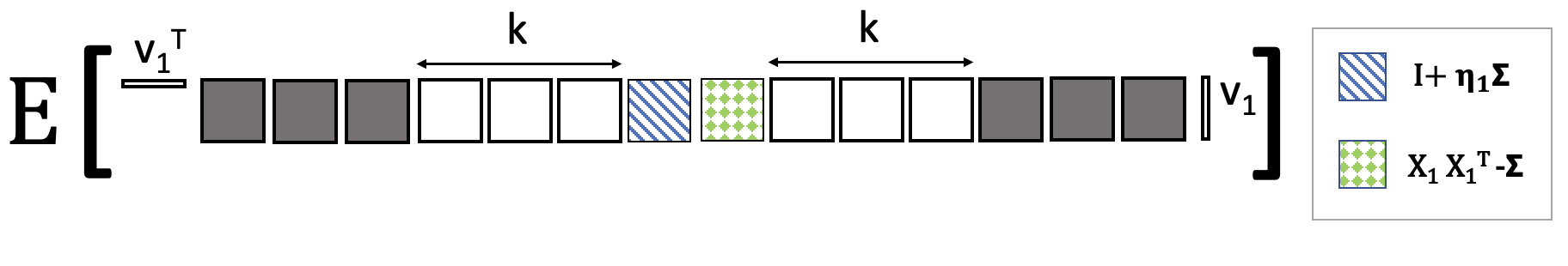

Syamantak Kumar, Shourya Pandey, Purnamrita Sarkar UAI, 2025 Paper link We propose a novel statistical inference framework for streaming principal component analysis (PCA) using Oja's algorithm, enabling the construction of confidence intervals for individual entries of the estimated eigenvector.

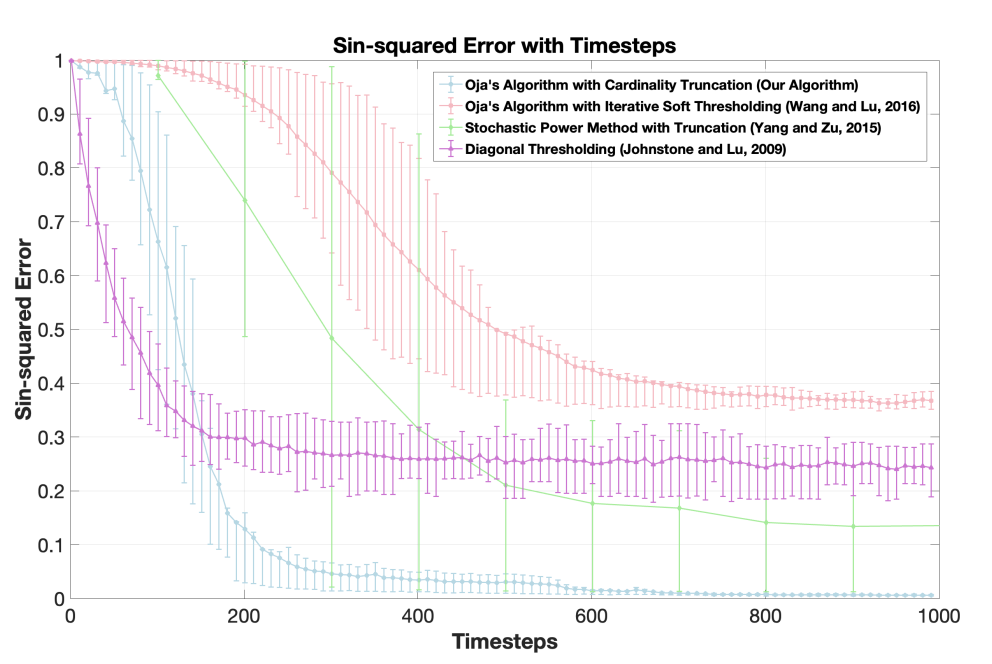

Syamantak Kumar, Purnamrita Sarkar NeurIPS, 2024 Paper link We analyze Oja's algorithm with thresholding for streaming sparse PCA, achieving near-optimal rates of convergence.
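A minimal sketch of the general idea, assuming the simplest variant: run plain Oja's algorithm over the stream, then hard-threshold the final vector to its `k` largest-magnitude entries. The sparsity level `k`, the step size, the synthetic spiked covariance, and the helper names `oja` and `hard_threshold` are assumptions for illustration; the paper's actual thresholding rule and its guarantees are not reproduced here.

```python
import numpy as np

def oja(samples, eta, seed=0):
    """Plain Oja's rule: streaming estimate of the top eigenvector."""
    rng = np.random.default_rng(seed)
    w = rng.standard_normal(samples.shape[1])
    w /= np.linalg.norm(w)
    for x in samples:
        w = w + eta * x * (x @ w)  # w <- w + eta * x x^T w
        w /= np.linalg.norm(w)
    return w

def hard_threshold(w, k):
    """Keep the k largest-magnitude entries of w, zero out the rest, and renormalize."""
    keep = np.argsort(np.abs(w))[-k:]
    out = np.zeros_like(w)
    out[keep] = w[keep]
    return out / np.linalg.norm(out)

# Synthetic spiked model: covariance I + 4 v v^T, with v supported on the first 3 of 50 coordinates.
rng = np.random.default_rng(2)
d, n, k = 50, 5000, 3
v = np.zeros(d)
v[:k] = 1 / np.sqrt(k)
X = rng.standard_normal((n, d)) + 2.0 * rng.standard_normal((n, 1)) * v
w_sparse = hard_threshold(oja(X, eta=0.005), k)
print(np.flatnonzero(w_sparse))  # indices of the estimated support
```

Thresholding only the final output keeps the streaming pass itself identical to ordinary Oja's algorithm; the sparsity assumption is used once, at the end, to discard coordinates dominated by noise.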

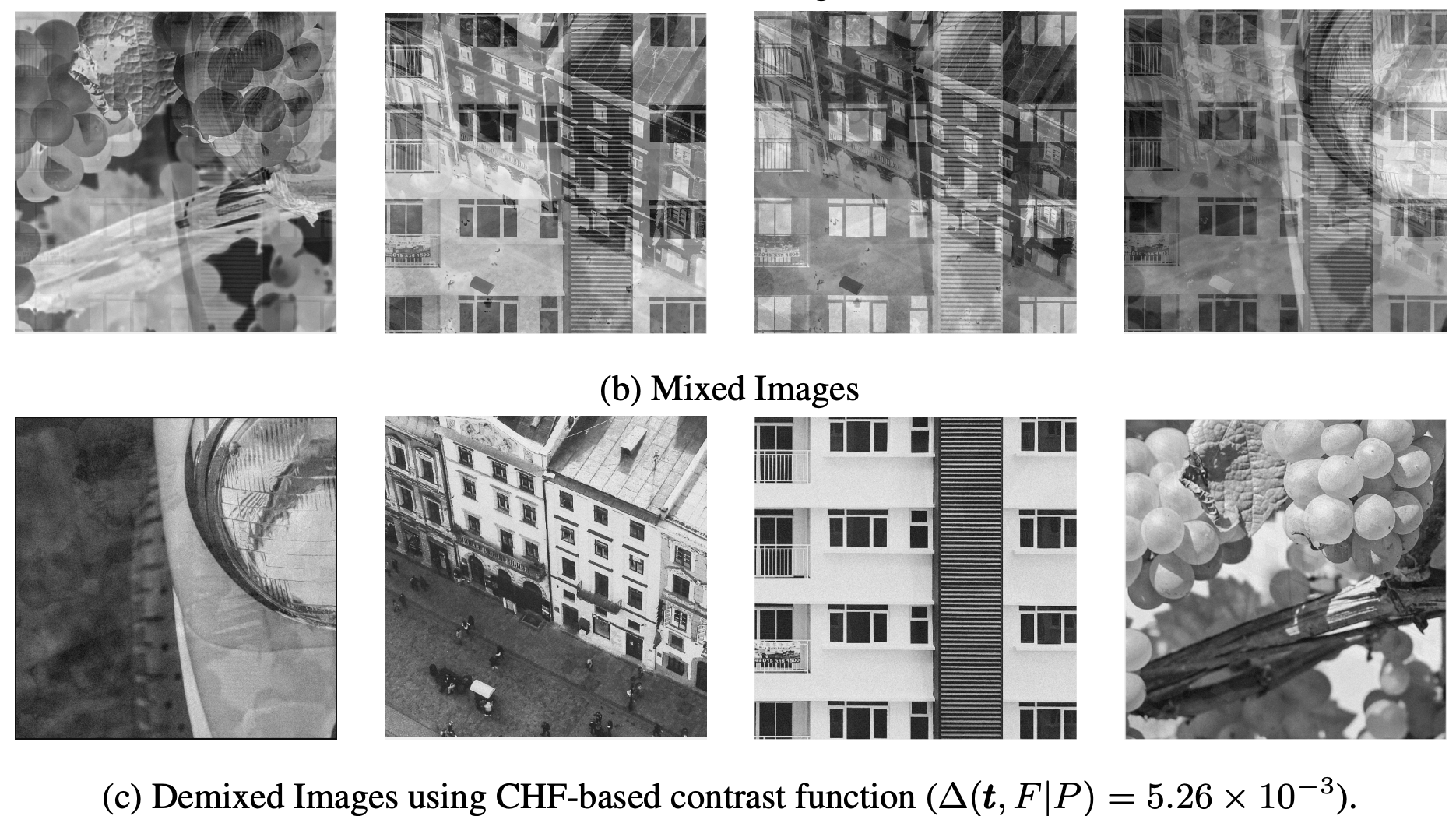

Syamantak Kumar, Purnamrita Sarkar, Peter Bickel, Derek Bean NeurIPS, 2024 Paper link We develop a nonparametric score to adaptively pick the right algorithm for Independent Component Analysis (ICA) with arbitrary Gaussian noise.

Arun Jambulapati, Syamantak Kumar, Jerry Li, Shourya Pandey, Ankit Pensia, Kevin Tian COLT, 2024 Paper link We theoretically analyze deflation-based algorithms for k-PCA using 1-PCA oracles.
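The deflation scheme itself is easy to state: call a 1-PCA oracle, project the returned direction out of the matrix, and repeat k times. Below is a minimal NumPy sketch in which power iteration plays the role of the oracle; `power_iteration`, `deflation_kpca`, and the iteration count are hypothetical choices, and the sketch ignores the approximate-oracle error that the paper actually analyzes.

```python
import numpy as np

def power_iteration(A, iters=500, seed=0):
    """Stand-in 1-PCA oracle: approximate top eigenvector of a PSD matrix A."""
    rng = np.random.default_rng(seed)
    v = rng.standard_normal(A.shape[0])
    for _ in range(iters):
        v = A @ v
        v /= np.linalg.norm(v)
    return v

def deflation_kpca(A, k, oracle=power_iteration):
    """Top-k PCA via k oracle calls, projecting out each direction found so far."""
    d = A.shape[0]
    V = np.zeros((d, k))
    P = np.eye(d)  # projector onto the orthogonal complement of the found directions
    for i in range(k):
        v = oracle(P @ A @ P)  # top direction of the deflated matrix
        v = P @ v  # enforce orthogonality to earlier directions
        v /= np.linalg.norm(v)
        V[:, i] = v
        P = P - np.outer(v, v)  # shrink the complement by one dimension
    return V

A = np.diag([5.0, 3.0, 1.0, 0.5])
V = deflation_kpca(A, k=2)
print(np.round(np.abs(V), 3))  # columns should align with e_1 and e_2
```

With an exact oracle this recovers the top-k eigenvectors; the interesting question, and the one the paper studies, is how errors from an inexact oracle propagate through the successive deflation steps.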

Syamantak Kumar, Purnamrita Sarkar NeurIPS, 2023 (Spotlight) Paper link We propose a nearly optimal streaming algorithm for performing PCA under Markovian dependence among the samples.

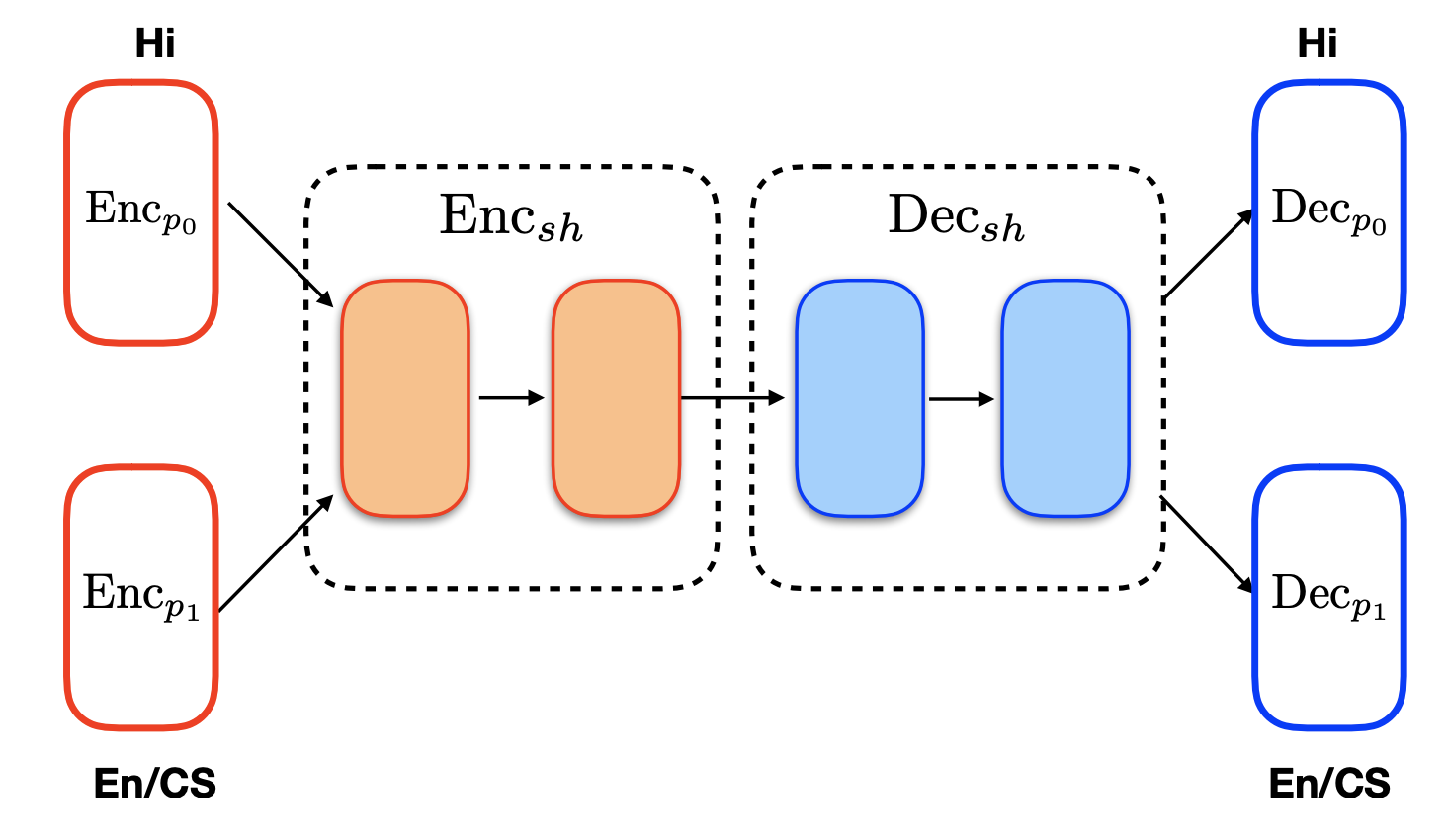

Ishan Tarunesh, Syamantak Kumar, Preethi Jyothi ACL-IJCNLP, 2021 Paper link In this work, we adapt a state-of-the-art neural machine translation model to generate Hindi-English code-switched sentences starting from monolingual Hindi sentences.

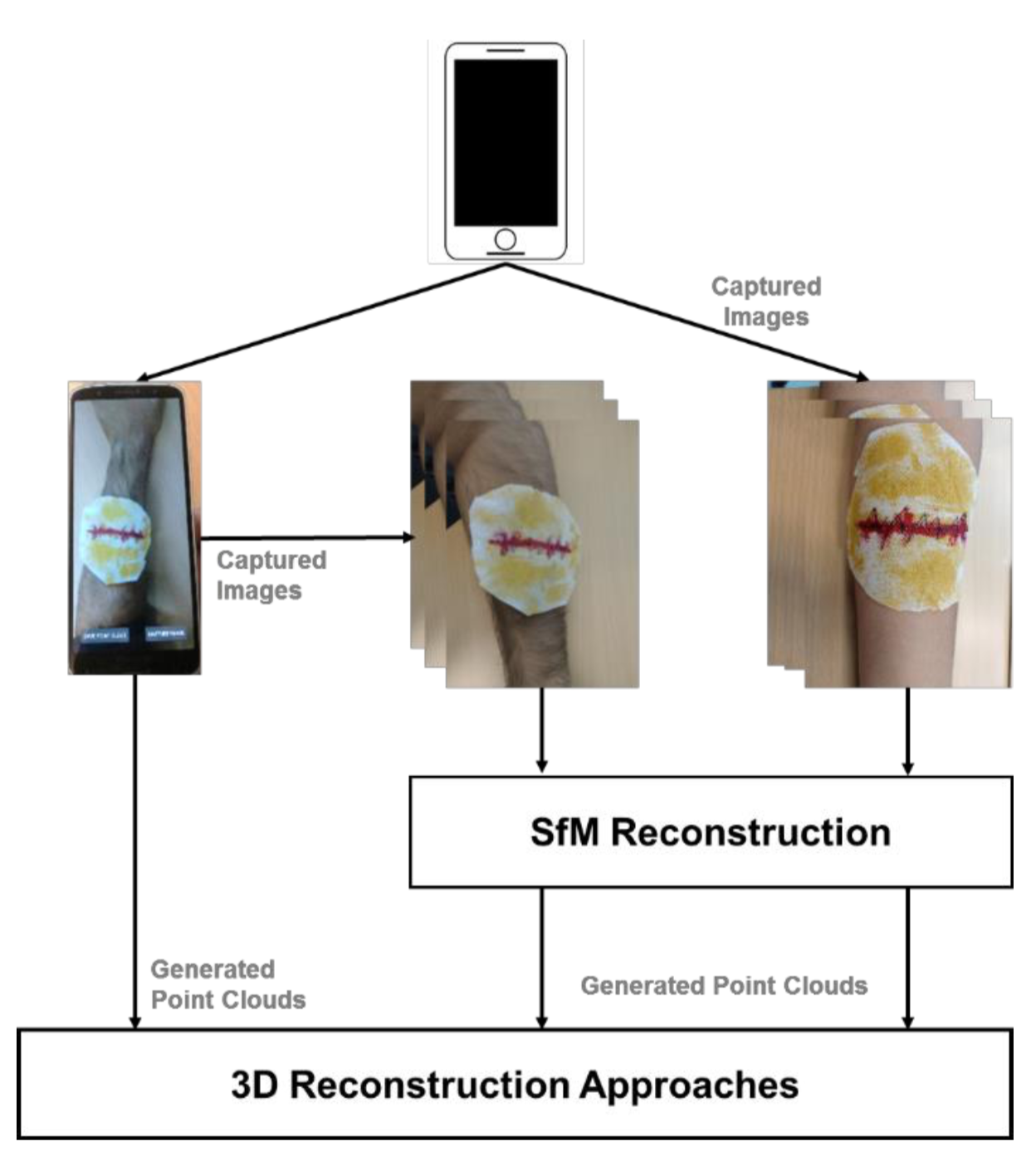

Syamantak Kumar*, Dhruv Jaglan*, Nagarajan Ganapathy, Thomas Deserno SPIE Medical Imaging Conference, 2019 Paper link We propose an Android application for real-time three-dimensional scanning of surface wounds to support effective remote diagnosis, and present a comparative study of the open-source libraries available for this task.

For a complete list of projects, please refer to my CV.

I worked at Google, Bangalore, with the Maps team on a model that predicts whether an edit made by a user on Google Maps is correct. This model helps ensure that the data displayed on Google Maps meets the accuracy it claims.

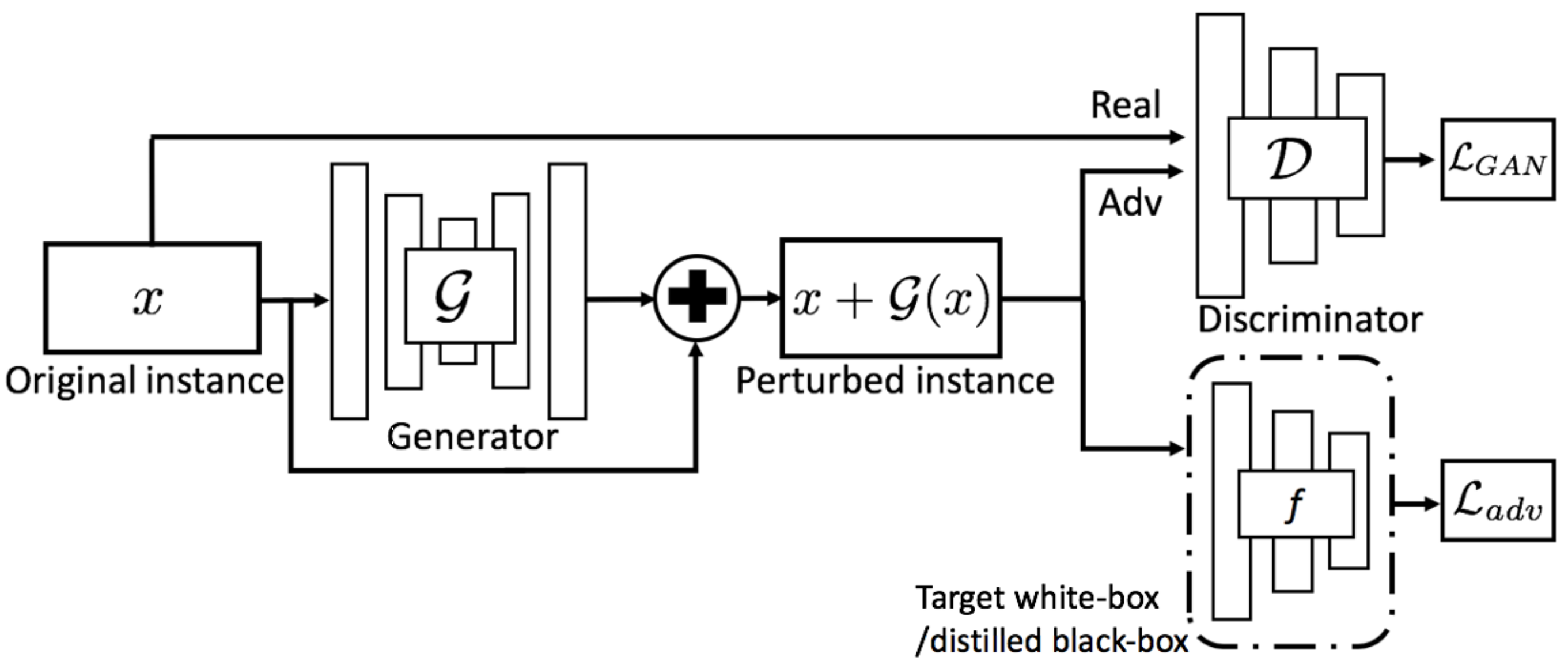

code / report Used Generative Adversarial Networks (GANs) to generate adversarial examples for keyword spotting systems, improving their robustness.

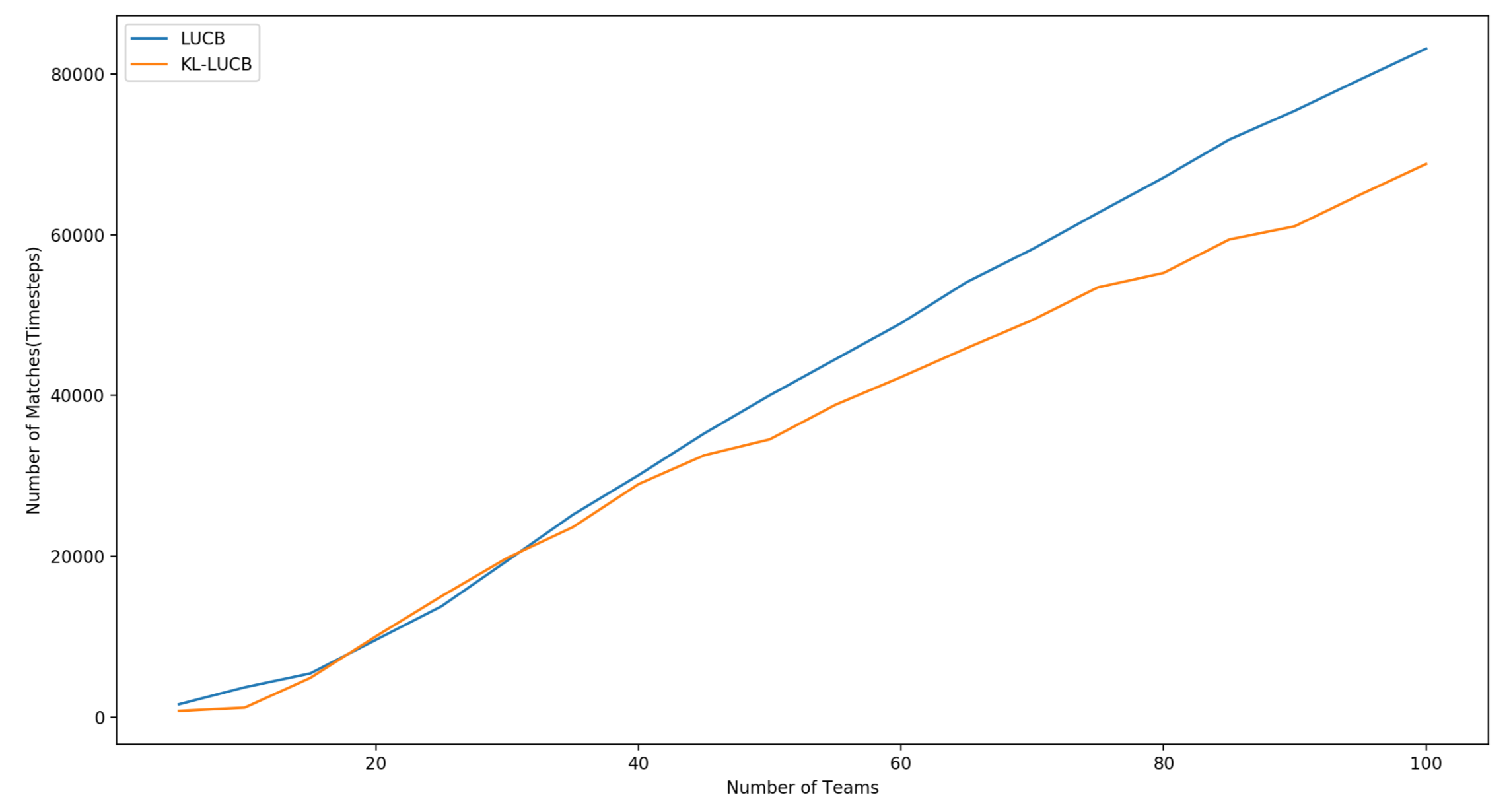

code / report Designed an algorithm for fully sequential sampling in a Probably Approximately Correct (PAC) setting to determine the top-K players in a tournament, given pairwise preferences.

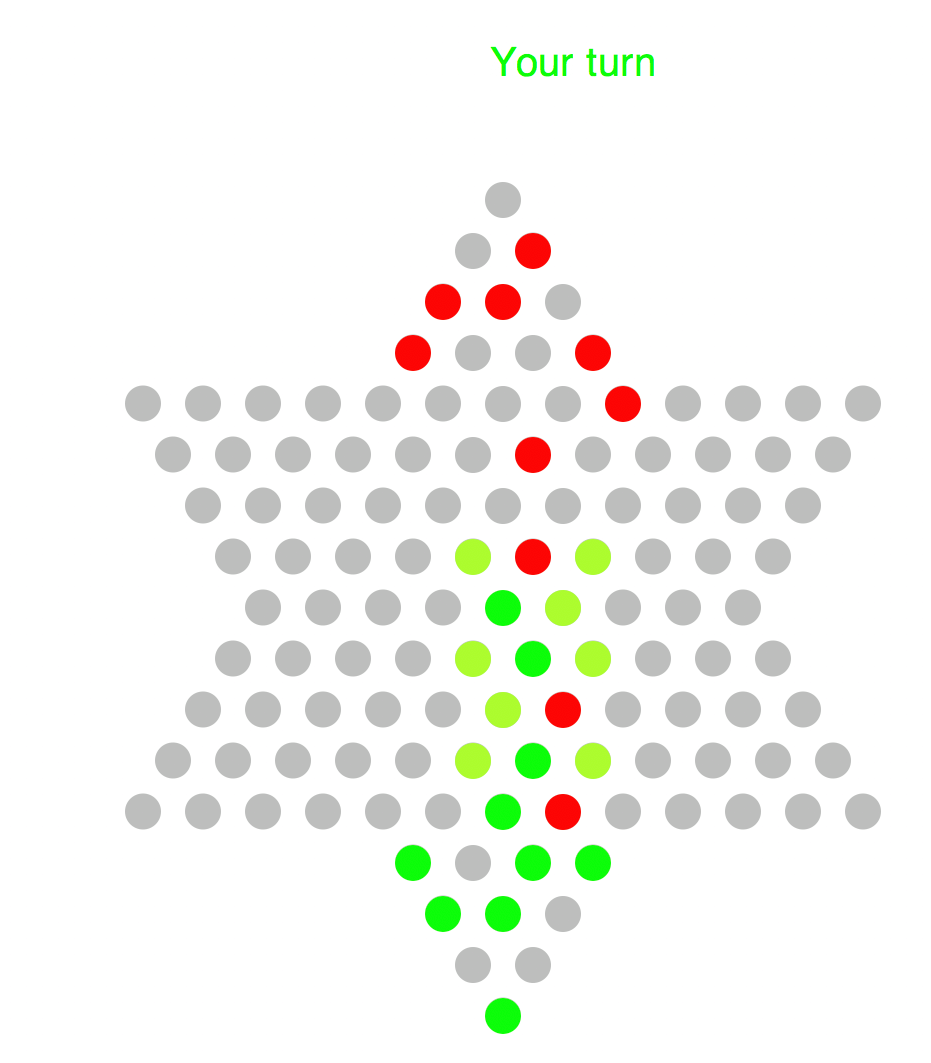

code Implemented an AI for playing Chinese Checkers using the Minimax algorithm with alpha-beta pruning. Implemented in Racket.
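The original project was written in Racket, and its Chinese Checkers move generator and evaluation function are not shown here. As an illustration of the search technique only, here is a generic minimax search with alpha-beta pruning in Python over a game tree encoded as nested lists, where leaves are static evaluation scores; the tree encoding and the function name `alphabeta` are assumptions.

```python
import math

def alphabeta(node, alpha=-math.inf, beta=math.inf, maximizing=True):
    """Minimax value of a nested-list game tree, skipping branches that cannot matter."""
    if not isinstance(node, list):
        return node  # leaf: static evaluation score
    if maximizing:
        value = -math.inf
        for child in node:
            value = max(value, alphabeta(child, alpha, beta, False))
            alpha = max(alpha, value)
            if alpha >= beta:
                break  # the minimizing parent will never allow this branch
        return value
    value = math.inf
    for child in node:
        value = min(value, alphabeta(child, alpha, beta, True))
        beta = min(beta, value)
        if alpha >= beta:
            break  # the maximizing parent already has a better option
    return value

# Root is a max node; its children are min nodes whose children are max nodes.
tree = [[[3, 17], [2, 12]], [[15], [25, 0]]]
print(alphabeta(tree))
```

Pruning happens at the `alpha >= beta` checks: once a branch provably cannot change the parent's choice, its remaining children are skipped, so the result matches plain minimax while visiting fewer leaves.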

Undergraduate Teaching Assistant, PH107, Quantum Physics and its Applications, Fall 2017

Graduate Teaching Assistant, CS361S, Network Security and Privacy, Fall 2021

Graduate Teaching Assistant, EE 461P, Data Science Principles, Spring 2022

Stolen this awesome template from Jon Barron. Thanks a lot for sharing!